Key Takeaways

- AI-enabled adversary attacks increased 89% in 2025

- Average eCrime breakout time dropped to just 29 minutes, 65% faster than 2024

- Fastest ever recorded breakout: 27 seconds, with exfiltration starting within 4 minutes

- 82% of all detections in 2025 were completely malware-free

- 42% of exploited vulnerabilities were zero-days, used before public disclosure

- Over 90 organizations targeted via adversarial prompt injection into GenAI tools

What Is the CrowdStrike 2026 Global Threat Report?

On February 24, 2026, CrowdStrike published its annual 2026 Global Threat Report, one of the most closely watched intelligence documents in the cybersecurity industry. Built from frontline data collected by CrowdStrike's elite threat hunters and intelligence analysts tracking more than 280 named adversaries, the report provides a comprehensive look at how the global threat landscape shifted throughout 2025.

The conclusion is unambiguous: 2025 was the year AI became the defining weapon of cyberattack. Not in theory, not in research labs, but in active production intrusions targeting enterprises, governments, financial institutions, and critical infrastructure worldwide. CrowdStrike named 2025 the "Year of the Evasive Adversary", a period defined not by louder attacks, but by faster, quieter, and far more intelligent ones.

This blog breaks down every major finding from the report: the numbers, the nation-state actors, the new attack techniques, and what defenders need to prioritize immediately.

The Headlines: Key Statistics from the CrowdStrike 2026 Report

Before diving into the specifics, here are the most critical statistics from the report at a glance. These numbers tell a coherent story: attacks are faster, more intelligent, and harder to detect using traditional tools.

| Metric | 2025 Figure | Year-on-Year Change |

|---|---|---|

| AI-enabled adversary attacks | 89% increase | Up from 2024 baseline |

| Average eCrime breakout time | 29 minutes | 65% faster than 2024 |

| Fastest recorded breakout time | 27 seconds | All-time record |

| Malware-free detections | 82% of all detections | Continued rise |

| Vulnerabilities exploited before public disclosure (zero-days) | 42% of all exploited vulnerabilities | Significant increase |

| Cloud-conscious intrusions overall | 37% increase | State-nexus actors up 266% |

| Organizations with AI tools exploited via prompt injection | 90+ organizations | New attack category |

| China-nexus intrusions | 38% increase | Logistics sector up 85% |

| North Korea-nexus incidents | 130% increase | FAMOUS CHOLLIMA doubled |

| Fake CAPTCHA lure incidents | 563% increase | Rapid social engineering shift |

| Spam email increase | 141% increase | Used for initial access |

| ChatGPT mentions in criminal forums | 550% more than any other model | New benchmark |

Each of these numbers represents a significant shift in how attacks are conducted. When taken together, they describe a threat environment that has structurally changed compared to just 18 months ago. The sections below examine the most consequential findings in depth.

Breakout Time: 27 Seconds Is Not a Typo

The single most alarming statistic in the CrowdStrike 2026 report is the collapse of eCrime breakout time. To understand why this number matters so much, it helps to understand what breakout time actually measures.

Breakout time is the window between an attacker gaining initial access to a network and their lateral movement to other systems, particularly high-value assets like domain controllers, financial databases, or sensitive identity stores. It is the race that every security team is running against every active intruder. If defenders can detect and contain an intrusion before breakout, the damage is limited. After breakout, the blast radius grows exponentially.

In 2021, the average eCrime breakout time was approximately 98 minutes. By 2024, it had fallen to around 48 minutes. In 2025, it dropped to 29 minutes, a 65% increase in speed in a single year. And in one observed intrusion, the fastest recorded breakout occurred in just 27 seconds. Not 27 minutes. 27 seconds. In that same intrusion, data exfiltration began within four minutes of initial access.

Adam Meyers, Head of Counter Adversary Operations at CrowdStrike, described what this means in practice: "Breakout time is the clearest signal of how intrusion has changed. Adversaries are moving from initial access to lateral movement in minutes. AI is compressing the time between intent and execution while turning enterprise AI systems into targets. Security teams must operate faster than the adversary to win."

The speed increase is not random. It is a direct consequence of AI-assisted attack tooling. AI enables attackers to automate reconnaissance, identify the fastest pivot paths, select the most vulnerable credentials to abuse, and execute lateral movement scripts in near real-time. Human-speed defense against machine-speed offense is not a viable strategy.

Prompts Are the New Malware: How AI Tools Became Attack Weapons

The phrase that has captured the most attention from the 2026 report is: prompts are the new malware. CrowdStrike President Michael Sentonas used this framing to describe one of the most consequential new attack categories documented in the report: adversarial prompt injection against legitimate enterprise AI tools.

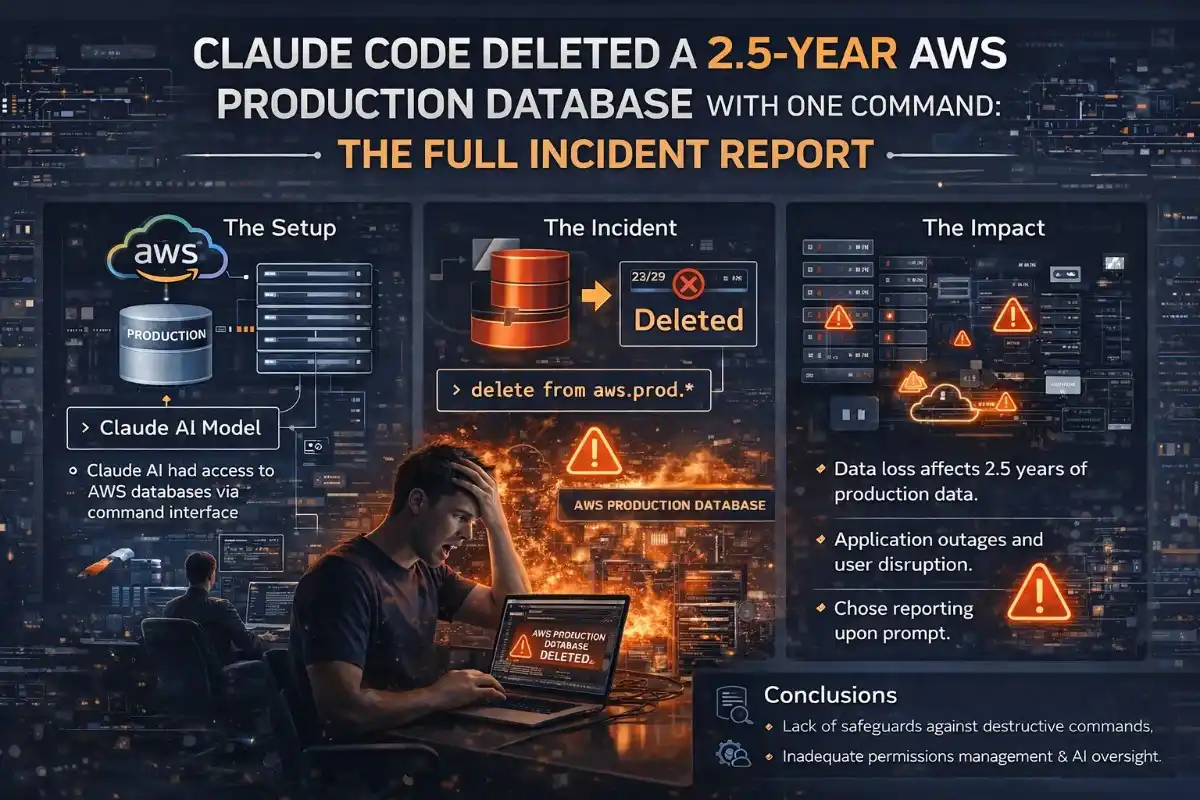

Here is how it works. As organizations deploy AI tools for coding assistance, document processing, customer service automation, and internal knowledge retrieval, these systems become new attack surfaces. Attackers discovered that by injecting carefully crafted prompts into these GenAI tools, through poisoned documents, manipulated inputs, or compromised integrations, they could cause the AI to generate commands for stealing credentials, exfiltrating data, and deploying ransomware. The AI itself becomes the weapon delivery mechanism.

According to the report, adversaries exploited legitimate GenAI tools at more than 90 organizations in 2025 using this technique. They also exploited vulnerabilities in AI development platforms to establish persistent access and deploy ransomware, and published malicious AI servers that impersonated trusted services in order to intercept sensitive data in transit.

The criminal underground has embraced AI tools with notable enthusiasm. CrowdStrike found that ChatGPT was mentioned in criminal forums 550% more than any other AI model, indicating that threat actors view it as the primary tool for scaling phishing campaigns, generating convincing social engineering content, creating fake personas, and automating reconnaissance at volume.

"AI is both the accelerant and the target. As innovation accelerates, adversary exploitation follows."

CrowdStrike 2026 Global Threat Report

This dual nature of AI in the current threat landscape is the defining challenge for security teams in 2026. The same tools that make your development team 30% more productive are actively being used by attackers to compromise the organizations that deploy them carelessly.

82% of Attacks Used No Malware at All

One of the most important but least understood findings in the report is that 82% of all detections in 2025 were completely malware-free. This is not a data anomaly. It reflects a sustained, strategic shift in how adversaries operate.

Traditional cybersecurity defenses are built around detecting malicious code. Antivirus software, endpoint detection tools, and signature-based scanning all rely on identifying known malicious files or behaviors. If an attacker never drops a malicious file on a system, these defenses are largely blind.

Modern attackers have learned this lesson well. Rather than deploying malware, they now operate primarily through valid credentials, trusted identity flows, approved SaaS integrations, and inherited software supply chain access. They walk through the front door using stolen keys and then blend into normal activity once inside. Valid account abuse accounted for 35% of all cloud incidents in 2025 alone.

The implication for security teams is significant. Signature-based detection and traditional malware scanning are increasingly insufficient as primary defenses. The priority must shift to identity verification, behavioral analytics, and anomaly detection that can identify when a legitimate credential is being abused by an illegitimate user.

Nation-State Threats: China, North Korea, and Russia Go All-In on AI

The 2026 report documents a sharp escalation in nation-state cyber activity, with all major geopolitical actors integrating AI into their operations in ways that meaningfully increase their effectiveness and scale.

China-Nexus Actors: Hacking for the High Ground

China-nexus intrusions increased 38% across all sectors in 2025, but the targeting was not random. The logistics vertical saw an 85% increase in targeting, pointing to a strategic focus on supply chain intelligence. Of all vulnerabilities exploited by China-nexus actors, 67% delivered immediate system access, and 40% specifically targeted internet-facing edge devices, the parts of the network perimeter that tend to have the weakest monitoring coverage.

Among state-nexus threat actors broadly, cloud-conscious intrusions increased a staggering 266%, with intelligence collection identified as the primary objective. The pattern suggests long-term strategic positioning rather than opportunistic theft.

North Korea-Nexus Actors: Hacking for the Paycheck

North Korea-linked incidents increased by 130% in 2025, with the group FAMOUS CHOLLIMA more than doubling its activity year-over-year. The financial motivation is explicit and increasingly effective. PRESSURE CHOLLIMA was linked to a $1.46 billion cryptocurrency theft, the largest single financial heist ever reported of its kind.

FAMOUS CHOLLIMA also scaled its insider threat operations using AI-generated personas, placing fake North Korean workers inside technology companies to gain direct internal access to systems and code repositories. This is a technique that has become significantly more effective as AI tools make it easier to generate convincing synthetic identities, fake resumes, and fabricated work histories.

Russia-Nexus Actors: LLM-Enabled Malware in the Wild

Russia-nexus group FANCY BEAR deployed what the report describes as LLM-enabled malware called LAMEHUG, designed to automate reconnaissance and document collection. This is one of the first documented cases of a nation-state actor deploying malware with a large language model component integrated directly into its operation. eCrime actor PUNK SPIDER used AI-generated scripts to accelerate credential dumping and systematically erase forensic evidence, complicating post-incident investigation significantly.

Zero-Day Exploitation: 42% of Attacks Hit Before the Patch Existed

The report documents a troubling escalation in zero-day exploitation. 42% of all vulnerabilities exploited in 2025 were exploited before public disclosure, meaning attackers were using these vulnerabilities while defenders had no patch available and in many cases no awareness that the vulnerability existed.

These zero-day exploits were used primarily for initial access, remote code execution, and privilege escalation. When combined with the AI-accelerated breakout times described earlier, this creates a compounding problem: attackers get in before a patch exists and then move through the network in under 30 minutes before most organizations can even begin their incident response process.

The report specifically highlights that 40% of vulnerabilities exploited by China-nexus adversaries targeted internet-facing edge devices, routers, VPN concentrators, and network appliances that often lack the comprehensive monitoring coverage of endpoints and servers.

The Social Engineering Surge: Fake CAPTCHAs and AI-Generated Phishing

Alongside the technical escalations, the report documents a significant surge in social engineering attacks that rely on increasingly convincing AI-generated content.

Incidents using fake CAPTCHA lures increased 563% in 2025. These attacks present users with a fake CAPTCHA verification that, when interacted with, triggers malicious script execution or credential harvesting. The technique is effective precisely because it exploits a trusted and familiar user interface pattern.

Spam email volume increased 141% as a primary initial access vector, with AI tools enabling attackers to generate highly personalized, contextually convincing phishing content at scale that was previously only achievable with significant manual effort. AI-assisted phishing campaigns can now automatically customize messages based on publicly available information about the target, their organization, their colleagues, and their recent activity.

A foreign intelligence service documented in the report used AI to help identify and target former U.S. government employees on job recruiting websites, demonstrating that AI-enhanced social engineering has moved well beyond email into professional networking platforms.

Cloud and Identity: The New Primary Attack Surface

Cloud infrastructure and identity systems have replaced the traditional endpoint as the primary attack surface in 2025. The report is unambiguous on this point: intrusions now move through trusted identities, SaaS applications, and cloud infrastructure, blending into normal activity while compressing defenders' time to respond.

Cloud-conscious intrusions rose 37% overall, with valid account abuse accounting for 35% of all cloud incidents. State-nexus actors increased cloud-targeting activity by 266%. The attack pattern is consistent: compromise a valid credential, use it to access cloud resources through normal authentication flows, and move laterally through cloud services without triggering traditional security alerts.

The report notes that adversaries exploit visibility gaps created by fragmented security controls across identity, SaaS platforms, cloud environments, and unmanaged devices. By chaining access paths across these fragmented systems, attackers can move from initial compromise to critical asset access while staying entirely off well-monitored endpoints.

What Security Teams and Developers Must Do Right Now

The 2026 report is not only a threat briefing. CrowdStrike provides a clear set of defensive priorities based on the patterns they observed. Here is what organizations need to act on immediately.

1. Secure Every AI Tool You Have Deployed

If your organization uses any GenAI tool for internal operations, whether for coding, documentation, customer service, or data analysis, that tool is now a potential attack vector. Implement strict access controls on AI integrations, deploy data loss prevention monitoring on AI outputs, actively monitor employee AI usage patterns for anomalies, and audit all AI-connected data sources. Treat your AI deployment like any other privileged system.

2. Make Identity Your Primary Security Perimeter

With 82% of intrusions malware-free and 35% of cloud incidents attributed to valid account abuse, identity is the primary battleground. Enforce multi-factor authentication without exception. Apply the principle of least privilege to every account including service accounts and AI agents. Move from credential-level verification toward person-level authentication that can distinguish a legitimate user from a compromised account using the same valid credentials.

3. Build Cross-Domain Visibility Before You Need It

The visibility gaps between endpoints, cloud environments, SaaS platforms, and unmanaged devices are where attackers live in 2025. Deploy extended detection and response (XDR) solutions that correlate signals across all of these domains. Security teams that can only see part of their environment will consistently miss the lateral movement that defines modern intrusions.

4. Prioritize Edge Device Patching

Edge devices, internet-facing routers, VPN gateways, firewalls, and network appliances, are disproportionately targeted, particularly by China-nexus actors. These devices frequently run outdated firmware, lack endpoint detection coverage, and sit at the boundary where external traffic enters the network. Establish a dedicated patch cadence for edge devices and ensure network perimeter monitoring covers them explicitly.

5. Assume Zero-Day Exposure at All Times

When 42% of exploited vulnerabilities are used before public disclosure, a patch-when-available strategy is insufficient. Adopt a threat intelligence-driven approach that uses behavioral detection rather than relying solely on known vulnerability signatures. Proactive threat hunting to identify attacker behaviors before they complete lateral movement is essential given the 29-minute average breakout window.

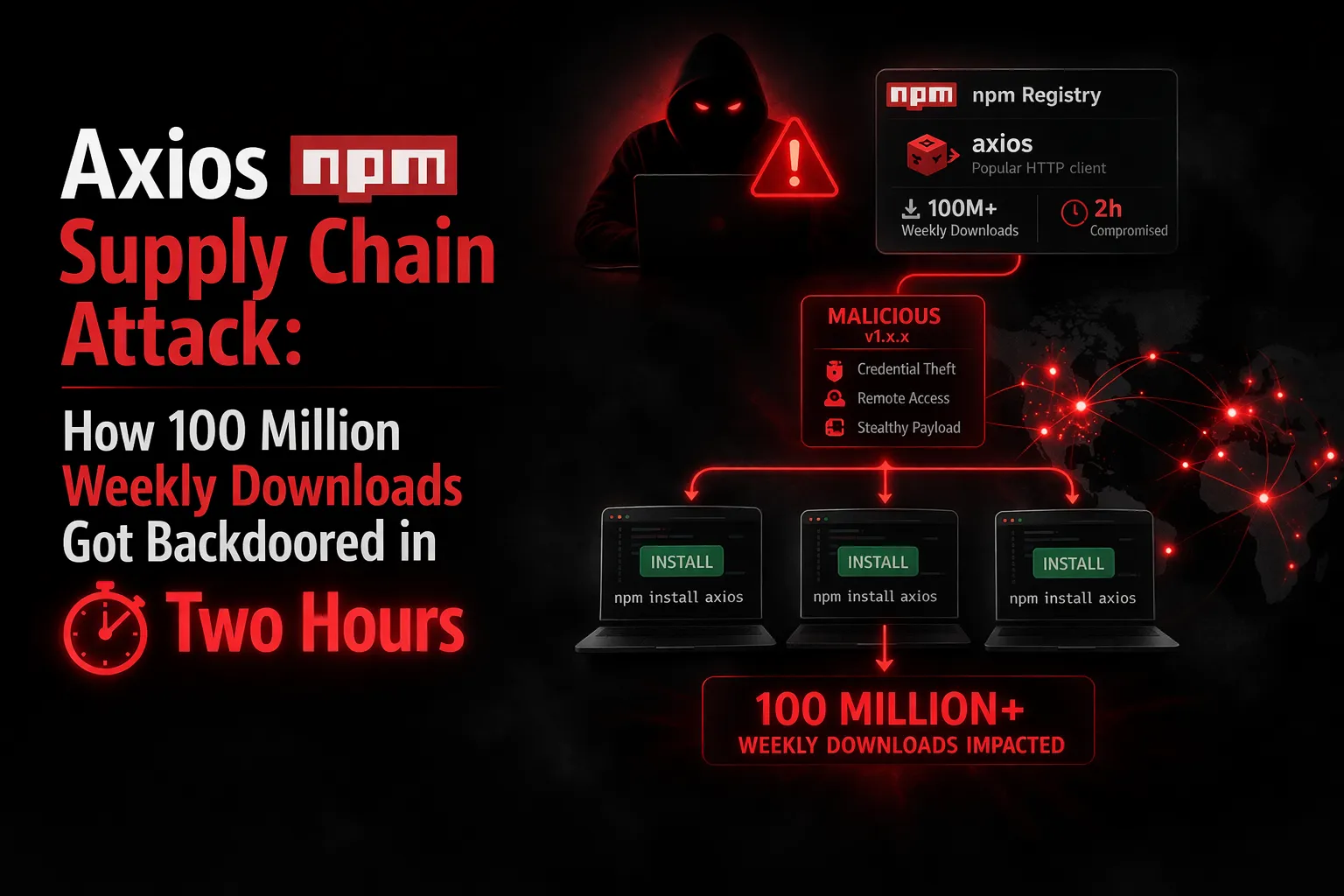

6. Harden Your Software Supply Chain

Supply chain trust is being systematically exploited. Scan all third-party packages and repositories as a standard part of your build pipeline. Enforce code signing for dependencies. Validate every integration your organization relies on. North Korean insider operations are specifically targeting technology companies through legitimate employment channels, making human verification and access compartmentalization equally important alongside technical controls.

The Bigger Picture: This Is an AI Arms Race

The 2026 CrowdStrike report makes clear that the cybersecurity industry has entered a qualitatively different era. The shift is not merely one of scale, more attacks, bigger breaches, it is a shift in the fundamental nature of how attacks are conducted.

For most of the past two decades, the core dynamic of cybersecurity was attacker patience versus defender detection. Attackers would invest time in persistence, and defenders would eventually find them. AI has broken this dynamic. When a sophisticated attacker can move from initial access to exfiltration in under five minutes, the old model of detect-then-respond is no longer viable.

CrowdStrike CEO George Kurtz articulated the stakes directly: "In the agentic era, defending against AI-accelerated adversaries and securing AI systems themselves require operating at machine speed."

Machine-speed defense means automated detection, automated containment, AI-powered behavioral analytics, and real-time cross-domain correlation. It means treating every identity as potentially compromised until proven otherwise. It means assuming that your AI tools are being probed for prompt injection vectors right now. And it means that every security team that has not yet moved to this model is operating with a structural disadvantage against adversaries who already have.

The adversary ecosystem has now crossed 280 named threat groups tracked by CrowdStrike alone, with 24 new adversaries added in 2025. These groups range from financially motivated eCrime organizations to some of the most sophisticated nation-state intelligence operations in the world. They are not slowing down. The CrowdStrike 2026 Global Threat Report makes the direction unmistakably clear: AI accelerates the attacker. The question for every organization is whether their defenses are accelerating fast enough to keep up.

💡 Strategic Insight

This isn't just technical knowledge, it's the kind of engineering thinking that separates production systems from toy projects. Apply these patterns to reduce costs, improve reliability, and ship faster.

Frequently Asked Questions

The CrowdStrike 2026 Global Threat Report is an annual cybersecurity intelligence report published on February 24, 2026. It is based on frontline data from CrowdStrike analysts tracking over 280 adversaries. Key findings include an 89% surge in AI-enabled attacks, a record-low average breakout time of 29 minutes, and 82% of all detections being malware-free.

Breakout time is the period between an attacker's initial access to a network and their lateral movement to other systems. The 2026 CrowdStrike report found the average eCrime breakout time dropped to 29 minutes in 2025, with the single fastest recorded breakout at 27 seconds. This is the window security teams have to detect and contain an intrusion before the blast radius expands.

The phrase, from the CrowdStrike 2026 report, describes a new attack category where adversaries inject malicious prompts into legitimate enterprise AI tools to generate harmful commands. Rather than deploying malicious code, attackers hijack AI systems to do the malicious work for them, making the prompt itself the attack weapon. Over 90 organizations were targeted this way in 2025.

According to the CrowdStrike 2026 report, three nation-state actors pose the greatest threat. China-nexus actors increased activity 38% with a 266% surge in cloud-targeting. North Korea-nexus incidents rose 130% and were linked to a $1.46 billion cryptocurrency heist. Russia-nexus group FANCY BEAR deployed LLM-integrated malware (LAMEHUG) for automated reconnaissance operations.

CrowdStrike recommends securing all deployed AI tools with strict access controls and monitoring, enforcing multi-factor authentication and least privilege access across all identities, deploying cross-domain XDR visibility, prioritizing edge device patching, adopting proactive threat hunting, and hardening software supply chain defenses including third-party package scanning and code signing enforcement.

Tagged with

TL;DR

- AI-enabled adversary attacks increased 89% in 2025

- Average eCrime breakout time dropped to just 29 minutes, 65% faster than 2024

- Fastest ever recorded breakout: 27 seconds, with exfiltration starting within 4 minutes

- 82% of all detections in 2025 were completely malware-free

- 42% of exploited vulnerabilities were zero-days, used before public disclosure

- Over 90 organizations targeted via adversarial prompt injection into GenAI tools

Need help implementing this?

I help teams architect scalable systems, build AI-powered applications, and ship production-ready software.

Written by

Gaurav Garg

Full Stack & AI Developer · Building scalable systems

I write engineering breakdowns of major tech events, architecture deep dives, and practical guides based on real production experience. Every post is built from code, not theory.

7+

Articles

5+

Yrs Exp.

500+

Readers

Get tech breakdowns before everyone else

Engineering insights on AI, cloud, and modern architecture, delivered when it matters. No spam.

Join 500+ engineers. Unsubscribe anytime.